The academic publishing industry may need to peer-review its own regulations in light of this revelation. While AI can be used to detect plagiarism and flag data fabrication, researchers are also using AI-based tools to write research papers on their behalf. Some of those papers have made their way into reputable journals, putting the journals’ credibility in question.

In an X (Twitter) thread posted on August 10th, a user named @itsandrewgao revealed that certain scientific research papers from diverse fields, all published in peer-reviewed journals, were co-written by AI. The user found them by scouring numerous academic databases. The authors of the papers left behind traces of a key “co-author”: an AI, which almost always identifies itself with the line “as an AI language model.”

According to the source, these research papers span areas such as commerce, the environment, the humanities, robotics, and diplomacy, among others. Many were surprised that the papers had actually been approved by the journals’ peer reviewers.

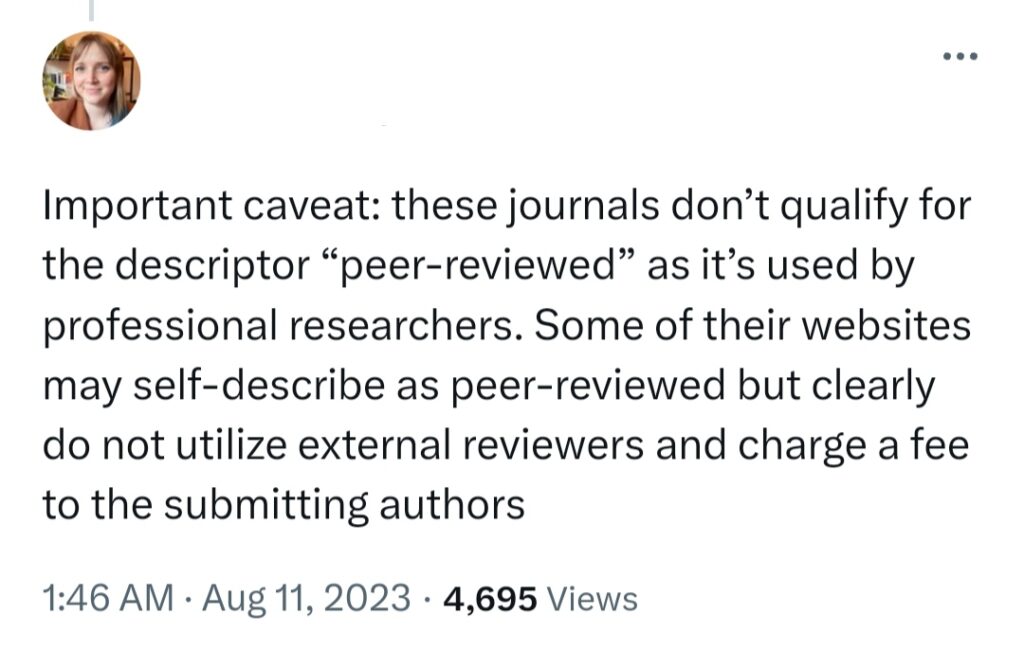

While many were shocked, some claimed that these journals aren’t exactly the standard for peer review, and that they could be fake or predatory.

However, one of the papers was shown to have appeared in a Scopus-indexed journal published by Elsevier, which states that about 99% of Nobel laureates in science since 2000 have published in its journals.

With a growing threat to the ethics and accuracy of scholarly literature, editors of other journals are considering policies that require authors to disclose their use of generative artificial intelligence. Several journals and publishing bodies, including Nature and all other Springer Nature journals, the Committee on Publication Ethics, and the JAMA Network, do not allow AI language models such as ChatGPT to be listed as co-authors.

In January, a Stanford University team introduced an algorithm called “DetectGPT” that estimates the likelihood that a text sample was generated by a language model. However, it still needs refinement before it can deliver robust results.
