On Thursday, December 15, Meta released its 2022 Coordinated Inauthentic Behavior Enforcements report, discussing its efforts against covert influence operations across its platforms, particularly Facebook.

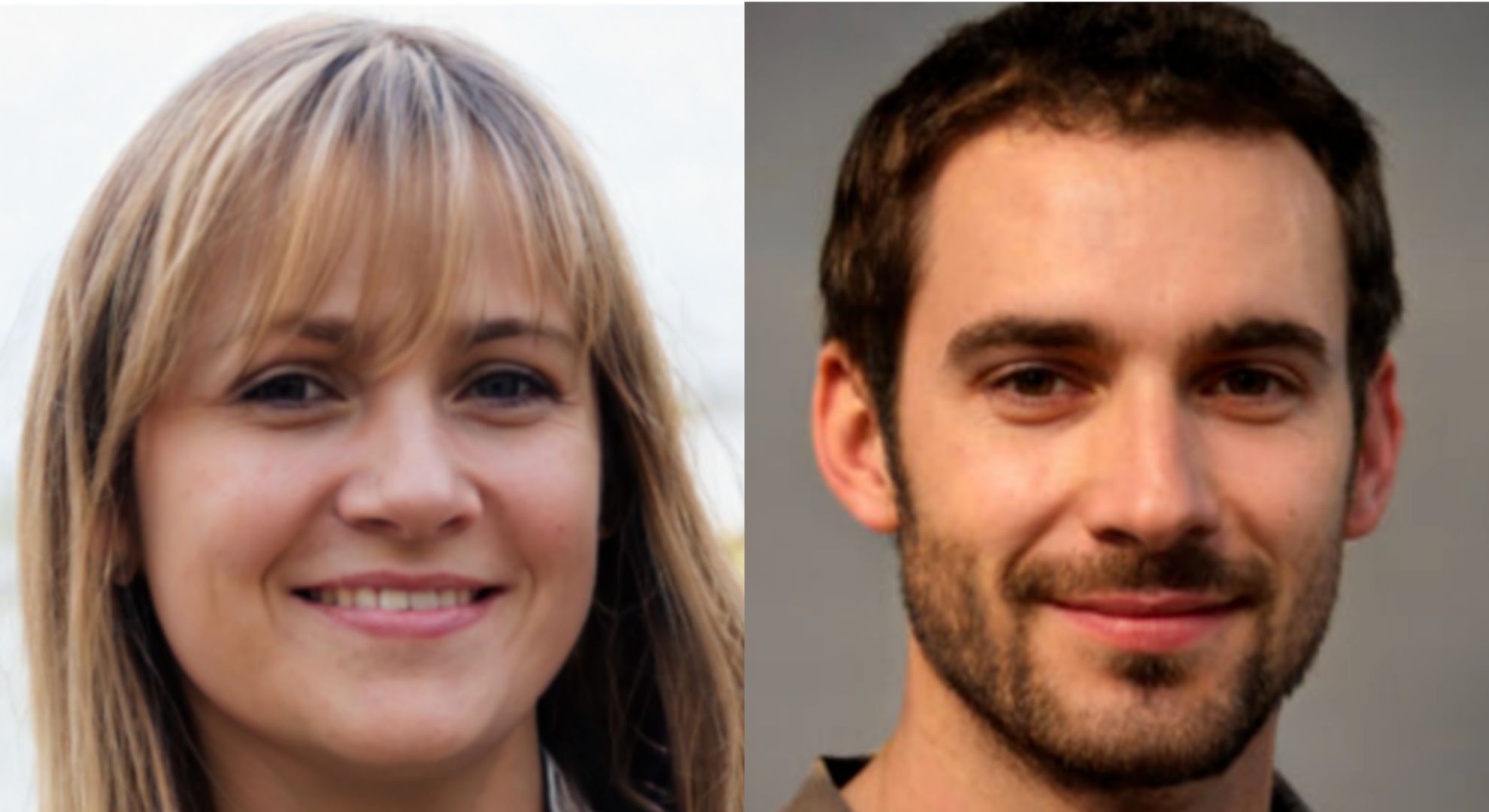

According to the report, there has been a “rapid rise” in the number of networks that use profile photos generated through AI (artificial intelligence) techniques such as generative adversarial networks (GANs). This technology is freely available to anyone on the internet, including threat actors and online predators, who can use it to create a fake but unique photo of a “human.”

Meta has disrupted more than 200 global networks for violating its Coordinated Inauthentic Behavior (CIB) policy since it began public reporting in 2017.

The United States was the country most targeted by the global CIB operations that the social media company has disrupted over the past years, followed by Ukraine and the United Kingdom.

“More than two-thirds of all the CIB networks we disrupted this year featured accounts that likely had GAN-generated profile pictures, suggesting that threat actors may see it as a way to make their fake accounts look more authentic and original,” Meta states in the public report.

In an interview with CBS, Ben Nimmo, one of the report's writers and Meta's Global Threat Intelligence Lead, explained that the profile pictures in question are “basically photos of people who do not exist. It’s not actually a person in the picture. It’s an image created by a computer.”

See some sample photos below:

Meta investigators look at a “combination of behavioral signals” to identify the GAN-generated profile pictures.

Although these photos can be deceptive, Nimmo identified some telltale signs: the eyes are perfectly aligned and lack reflections, the clothing is unusual, the background appears artificial or edited, and the ears and hair show peculiarities.

Meta has continued its efforts to disrupt fake accounts made through AI. From 2016 to October 2021, the company reportedly invested more than $13 billion in safety and security teams composed of over 40,000 individuals specifically assigned to moderation, or “more than the size of the FBI.”
